© 2023 yanghn. All rights reserved. Powered by Obsidian

4.7 前向传播、反向传播和计算图

要点

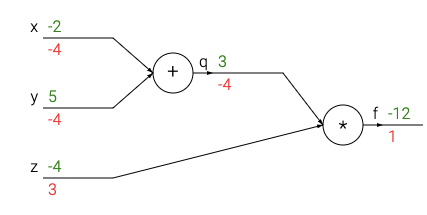

1. 向前传播

按顺序(从输入层到输出层)计算和存储神经网络中每层的结果。

# set some inputs

x = -2; y = 5; z = -4

# perform the forward pass

q = x + y # q becomes 3

f = q * z # f becomes -12

2. 向后传播

按照链式法则,每个计算单元都可以计算 loss 基于当前变量的偏导数(上图红色部分)

# perform the backward pass (backpropagation) in reverse order:

# first backprop through f = q * z

dfdz = q # df/dz = q, so gradient on z becomes 3

dfdq = z # df/dq = z, so gradient on q becomes -4

dqdx = 1.0

dqdy = 1.0

# now backprop through q = x + y

dfdx = dfdq * dqdx # The multiplication here is the chain rule!

dfdy = dfdq * dqdy

举例

向前传播:

x = 3 # example values

y = -4

# forward pass

sigy = 1.0 / (1 + math.exp(-y)) # sigmoid in numerator #(1)

num = x + sigy # numerator #(2)

sigx = 1.0 / (1 + math.exp(-x)) # sigmoid in denominator #(3)

xpy = x + y #(4)

xpysqr = xpy**2 #(5)

den = sigx + xpysqr # denominator #(6)

invden = 1.0 / den #(7)

f = num * invden # done! #(8)

# 向后传播:

# backprop f = num * invden

dnum = invden # gradient on numerator #(8)

dinvden = num #(8)

# backprop invden = 1.0 / den

dden = (-1.0 / (den**2)) * dinvden #(7)

# backprop den = sigx + xpysqr

dsigx = (1) * dden #(6)

dxpysqr = (1) * dden #(6)

# backprop xpysqr = xpy**2

dxpy = (2 * xpy) * dxpysqr #(5)

# backprop xpy = x + y

dx = (1) * dxpy #(4)

dy = (1) * dxpy #(4)

# backprop sigx = 1.0 / (1 + math.exp(-x))

dx += ((1 - sigx) * sigx) * dsigx # Notice += !! See notes below #(3)

# backprop num = x + sigy

dx += (1) * dnum #(2)

dsigy = (1) * dnum #(2)

# backprop sigy = 1.0 / (1 + math.exp(-y))

dy += ((1 - sigy) * sigy) * dsigy #(1)

# done! phew